The system-get-ontapi-version call returns a much nicer result, with major and minor version children. I would prefer not to rely on parsing the release date string if possible. Is there another way to get this information? For example, could I correlate the version info returned by the system-get-ontapi-version call with a specific version of Data ONTAP?

NetApp® ONTAP® 9 unifies data management across flash, disk, and cloud to simplify your storage environment. It bridges current enterprise workloads and new emerging applications. It builds the foundation for a Data Fabric, making it easy to move your data.

Flexible Deployments from Edge to Core to Cloud

Manage data consistently across your hybrid cloud. ONTAP software provides the rock-solid foundation for data management on the broadest range of deployment options, from engineered systems to commodity servers to the cloud.

- NetApp Announces ONTAP 9! All Release Info Here! This has a lot to do with the upcoming release of ONTAP Select, the version of ONTAP that will run on white box.

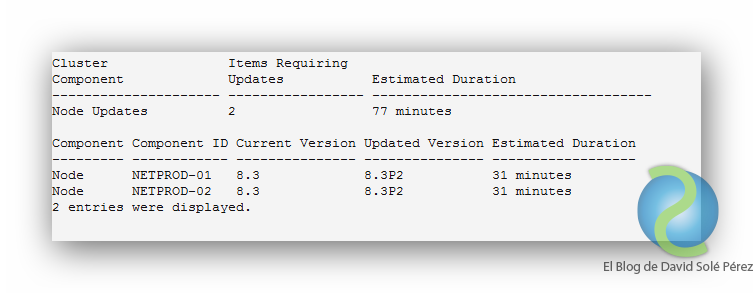

- After all of the HA pairs have been upgraded, you must use the version command to verify that all of the nodes are running the target release. About this task The cluster version is the lowest version of Data ONTAP running on any node in the cluster.

- The ONTAP command-line interface (CLI) provides a command-based view of the management interface. You enter commands at the storage system prompt, and command results are displayed in text.
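The rule quoted above, that the cluster version is the lowest version running on any node, can be illustrated with a minimal Python sketch. The node names and the plain dotted version strings are assumptions made for illustration; real `version` output contains more text (for example patch suffixes) that this sketch does not parse.

```python
def parse_version(text):
    """Turn a dotted version such as '9.5' into a tuple of ints for numeric comparison."""
    return tuple(int(part) for part in text.split("."))


def cluster_version(node_versions):
    """Return the lowest version string among all nodes (hypothetical helper)."""
    return min(node_versions.values(), key=parse_version)


# Hypothetical nodes in the middle of a rolling upgrade.
nodes = {"node-01": "9.5", "node-02": "9.4", "node-03": "9.10"}
print(cluster_version(nodes))  # prints 9.4
```

Comparing tuples of integers rather than raw strings matters here: as strings, "9.10" would sort before "9.4".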

| Developer | NetApp |
|---|---|
| OS family | Unix-like (BSD) (Data ONTAP GX, Data ONTAP 8, and later) |
| Working state | Active |
| Platforms | IA-32 (no longer supported), Alpha (no longer supported), MIPS (no longer supported), x86-64 with ONTAP 8 and higher |
| Kernel type | Monolithic with dynamically loadable modules |
| Userland | BSD |
| Default user interface | Command-line interface (PowerShell, SSH, serial console); graphical user interfaces over web-based user interfaces; REST API |
| Preceded by | Clustered Data ONTAP |
| Official website | www.netapp.com/us/products/data-management-software/ontap.aspx |

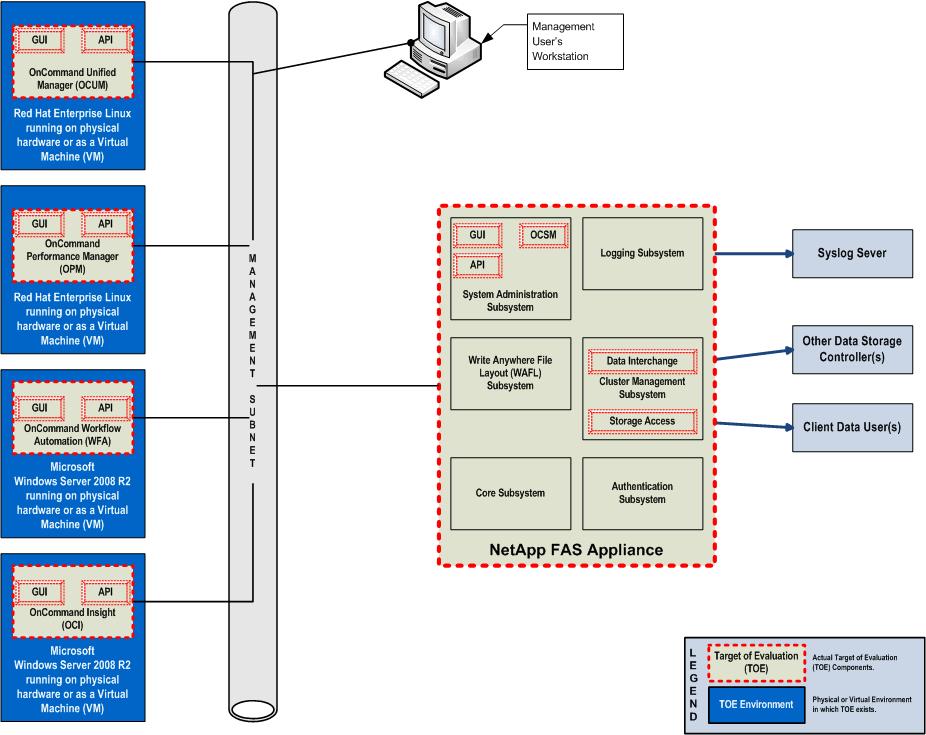

ONTAP, also known as Data ONTAP, Clustered Data ONTAP (cDOT), or Data ONTAP 7-Mode, is NetApp's proprietary operating system used in storage disk arrays such as NetApp FAS and AFF, ONTAP Select, and Cloud Volumes ONTAP. With the release of version 9.0, NetApp simplified the Data ONTAP name by removing the word 'Data' from it and removed the 7-Mode image; ONTAP 9 is therefore the successor to Clustered Data ONTAP 8.

ONTAP includes code from Berkeley Net/2 BSD Unix, Spinnaker Networks technology, and other operating systems.[1] ONTAP originally only supported NFS, but later added support for SMB, iSCSI, and Fibre Channel Protocol (including Fibre Channel over Ethernet and FC-NVMe). On June 16, 2006,[2] NetApp released two variants of Data ONTAP, namely Data ONTAP 7G and, with nearly a complete rewrite,[1] Data ONTAP GX. Data ONTAP GX was based on grid technology acquired from Spinnaker Networks. In 2010 these software product lines merged into one OS, Data ONTAP 8, which folded Data ONTAP 7G onto the Data ONTAP GX cluster platform.

Data ONTAP 8 includes two distinct operating modes held on a single firmware image. The modes are called ONTAP 7-Mode and ONTAP Cluster-Mode. The last supported version of ONTAP 7-Mode issued by NetApp was version 8.2.5. All subsequent versions of ONTAP (version 8.3 and onwards) have only one operating mode - ONTAP Cluster-Mode.

The majority of large-storage arrays from other vendors tend to use commodity hardware with an operating system such as Microsoft Windows Server, VxWorks or tuned Linux. NetApp storage arrays use highly customized hardware and the proprietary ONTAP operating system, both originally designed by NetApp founders David Hitz and James Lau specifically for storage-serving purposes. ONTAP is NetApp's internal operating system, specially optimized for storage functions at high and low level. The original version of ONTAP had a proprietary non-UNIX kernel and a TCP/IP stack, networking commands, and low-level startup code from BSD.[3][1] The version descended from Data ONTAP GX boots from FreeBSD as a stand-alone kernel-space module and uses some functions of FreeBSD (for example, it uses a command interpreter and drivers stack).[1] ONTAP is also used for virtual storage appliances (VSA), such as ONTAP Select and Cloud Volumes ONTAP, both of which are based on a previous product named Data ONTAP Edge.

All storage array hardware includes battery-backed non-volatile memory,[4] which allows it to commit writes to stable storage quickly, without waiting on disks, while virtual storage appliances use virtual non-volatile memory.

Implementers often organize two storage systems in a high-availability cluster with a private high-speed link: Fibre Channel, InfiniBand, 10 Gigabit Ethernet, 40 Gigabit Ethernet, or 100 Gigabit Ethernet. One can additionally group such clusters under a single namespace when running in the 'cluster mode' of the Data ONTAP 8 operating system or on ONTAP 9.

Data ONTAP was made available for commodity computing servers with x86 processors, running atop the VMware vSphere hypervisor, under the name 'ONTAP Edge'.[5] Later, ONTAP Edge was renamed ONTAP Select, and KVM was added as a supported hypervisor.

History

Data ONTAP, including WAFL, was developed in 1992 by David Hitz, James Lau,[6] and Michael Malcolm.[7] Initially, it supported NFSv2; the CIFS protocol was introduced in Data ONTAP 4.0 in 1996.[8] In April 2019, Octavian Tanase, SVP ONTAP, posted a preview photo on his Twitter account of ONTAP running in Kubernetes as a container for a demonstration.

WAFL File System

The Write Anywhere File Layout (WAFL) is a file layout used by ONTAP OS that supports large, high-performance RAID arrays, quick restarts without lengthy consistency checks in the event of a crash or power failure, and growing the size of the filesystems quickly.

Storage Efficiencies

Inline Adaptive Compression & Inline Data Compaction

ONTAP OS contains a number of storage efficiencies, which are based on WAFL functionalities. They are supported by all protocols and do not require licenses. In February 2018,[9] NetApp claimed that AFF systems give its clients an average 4.72:1 storage efficiency from deduplication, compression, compaction, and clone savings. Starting with ONTAP 9.3, offline deduplication and compression scanners start automatically by default, triggered by the percentage of new data written rather than by a schedule.

- Data Reduction efficiency is the sum of Volume and Aggregate Efficiencies and Zero-block deduplication:

  - Volume Efficiencies can be enabled or disabled individually and on a volume-by-volume basis:

    - Offline Volume Deduplication, which works at the 4KB block level

    - Additional efficiency mechanisms were introduced later, such as Offline Volume Compression, also known as Post-process (or Background) Compression. There are two types: Post-process secondary compression and Post-process adaptive compression

    - Inline Volume Deduplication and Inline Volume Compression process some of the data on the fly before it reaches the disks. They are designed to leave data unprocessed if ONTAP considers that handling it on the fly would take too long, and to leave that data to other storage efficiency mechanisms later. There are two types of Inline Volume Compression: Inline adaptive compression and Inline secondary compression

  - Aggregate Level Storage Efficiencies include:

    - Data Compaction, another mechanism, which packs many data blocks smaller than 4KB into a single 4KB block

    - Inline Aggregate-wide data deduplication (IAD) and Post-process aggregate deduplication, also known as Cross-Volume Deduplication,[10] share common blocks between volumes on an aggregate. IAD can throttle itself when the storage system crosses a certain threshold. The current limit of physical space of a single SSD aggregate is 800TiB

  - Inline Zero-block Deduplication[11] deduplicates zeroes on the fly before they reach the disks

- Snapshots and FlexClones are also considered efficiency mechanisms. Starting with 9.4, ONTAP by default deduplicates data across the active file system and all the snapshots on the volume. Savings from snapshot sharing scale with the number of snapshots: the more snapshots, the more savings. Snapshot sharing therefore gives more savings on SnapMirror destination systems.

- Thin Provisioning
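The idea behind data compaction in the list above, several sub-4KB chunks sharing one 4KB block, can be sketched in Python. The first-fit placement strategy and the function name `compact` are assumptions chosen for brevity; the text does not say how ONTAP actually selects which chunks share a block.

```python
BLOCK_SIZE = 4096  # bytes in one physical block


def compact(chunk_sizes):
    """Pack chunk sizes (in bytes) into 4 KB blocks using first-fit placement.

    Returns a list of blocks, each a list of the chunk sizes stored in it.
    First-fit is an illustrative assumption, not ONTAP's documented algorithm.
    """
    blocks = []
    for size in chunk_sizes:
        if not 0 < size <= BLOCK_SIZE:
            raise ValueError(f"chunk of {size} bytes does not fit in one block")
        for block in blocks:
            if sum(block) + size <= BLOCK_SIZE:
                block.append(size)  # chunk fits next to earlier chunks
                break
        else:
            blocks.append([size])  # no existing block has room; start a new one
    return blocks


packed = compact([1024, 2048, 1024, 512])
print(len(packed), "blocks instead of 4")
```

Without compaction each of the four chunks would occupy its own 4KB block; here the first three fill one block exactly and the fourth starts a second.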

Cross-Volume Deduplication storage efficiency features work only on SSD media. Inline and offline deduplication mechanisms rely on databases that consist of links to data blocks and checksums for the data blocks that have been handled by the deduplication process. A deduplication database is located on each volume and aggregate where deduplication is enabled. All Flash FAS systems do not support Post-process Compression.
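A database of block links and checksums, as described above, can be modelled with a small Python sketch. The class name `DedupStore`, the use of SHA-256 as the checksum, and the in-memory dictionaries are all assumptions for illustration; a production system would typically also compare block contents before sharing them.

```python
import hashlib

BLOCK_SIZE = 4096  # deduplication works on 4 KB blocks


class DedupStore:
    """Toy block store that keeps each distinct 4 KB block only once."""

    def __init__(self):
        self.blocks = {}  # checksum -> block contents, stored once
        self.refs = {}    # checksum -> number of logical blocks linked to it

    def write(self, data):
        """Store data block by block and return the list of checksums (links)."""
        links = []
        for offset in range(0, len(data), BLOCK_SIZE):
            block = data[offset:offset + BLOCK_SIZE]
            checksum = hashlib.sha256(block).hexdigest()
            if checksum not in self.blocks:
                self.blocks[checksum] = block  # first copy is physically stored
            self.refs[checksum] = self.refs.get(checksum, 0) + 1
            links.append(checksum)
        return links

    def read(self, links):
        """Rebuild the original data by following the links."""
        return b"".join(self.blocks[checksum] for checksum in links)


store = DedupStore()
payload = b"A" * BLOCK_SIZE * 3 + b"B" * BLOCK_SIZE
links = store.write(payload)
print(len(links), "logical blocks,", len(store.blocks), "physical blocks")
```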

Order of Storage Efficiencies execution is as follows:

- Inline Zero-Block deduplication

- Inline Compression: adaptive compression is used for files that can be compressed to 8KB, and secondary compression is used for files larger than 32KB

- Inline Deduplication: Volume first, then Aggregate

- Inline Adaptive Data Compaction

- Post-process Compression

- Post-process Deduplication: Volume first, then Aggregate

Aggregates

WAFL FlexVol Layout on an Aggregate

Internal organization of an Aggregate with two plexes

One or more RAID groups form an 'aggregate', and within aggregates the ONTAP operating system sets up 'flexible volumes' (FlexVol) to actually store data that users can access. Similarly to RAID-0, each aggregate consolidates space from underlying protected RAID groups to provide one logical piece of storage for flexible volumes. Besides aggregates built from NetApp's own disks and RAID groups, aggregates can consist of LUNs already protected by third-party storage systems with FlexArray, ONTAP Select, or Cloud Volumes ONTAP. Each aggregate consists either of LUNs or of NetApp's own RAID groups. An alternative is 'traditional volumes', where one or more RAID groups form a single static volume. Flexible volumes offer the advantage that many of them can be created on a single aggregate and resized at any time. Smaller volumes can then share all of the spindles available to the underlying aggregate, and in combination with storage QoS the performance of flexible volumes can be changed on the fly, which traditional volumes do not allow. However, traditional volumes can (theoretically) handle slightly higher I/O throughput than flexible volumes (with the same number of spindles), as they do not have to go through an additional virtualization layer to talk to the underlying disk. Aggregates and traditional volumes can only be expanded, never contracted. The current maximum usable physical aggregate size is 800 TiB for All-Flash FAS systems.[12]

7-Mode and earlier

The first form of redundancy added to ONTAP was the ability to organize pairs of NetApp storage systems into a high-availability cluster (HA-Pair);[13] an HA-Pair could scale capacity by adding disk shelves. When the performance maximum was reached with an HA-Pair, there were two ways to proceed: one was to buy another storage system and divide the workload between them, another was to buy a new, more powerful storage system and migrate all workload to it. All the AFF and FAS storage systems were usually able to connect old disk shelves from previous models—this process is called head-swap. Head-swap requires downtime for re-cabling operations and provides access to old data with new controller without system re-configuration. From Data ONTAP 8, each firmware image contains two operating systems, named 'Modes': 7-Mode and Cluster-Mode.[14] Both modes could be used on the same FAS platform, one at a time. However, data from each of the modes wasn't compatible with the other, in case of a FAS conversion from one mode to another, or in case of re-cabling disk shelves from 7-Mode to Cluster-Mode and vice versa.

Later, NetApp released the 7-Mode Transition Tool (7MTT), which is able to convert data on old disk shelves from 7-Mode to Cluster-Mode. This conversion is called Copy-Free Transition,[15] a process that requires downtime. With version 8.3, 7-Mode was removed from the Data ONTAP firmware image.[16]

Clustered ONTAP

Clustered ONTAP is a new, more advanced OS, compared to its predecessor Data ONTAP (version 7 and version 8 in 7-Mode), which is able to scale out by adding new HA-pairs to a single namespace cluster with transparent data migration across the entire cluster. In version 8.0, a new aggregate type was introduced, with a size threshold larger than the 16-terabyte (TB) aggregate size threshold that was supported in previous releases of Data ONTAP, also named the 64-bit aggregate.[17]

In version 9.0, nearly all of the features from 7-mode were successfully implemented in ONTAP (Clustered) including SnapLock,[18] while many new features that were not available in 7-Mode were introduced, including features such as FlexGroup, FabricPool, and new capabilities such as fast-provisioning workloads and Flash optimization.[19]

The uniqueness of NetApp's Clustered ONTAP is in the ability to add heterogeneous systems (where all systems in a single cluster do not have to be of the same model or generation) to a single cluster. This provides a single pane of glass for managing all the nodes in a cluster, and non-disruptive operations such as adding new models to a cluster, removing old nodes, and online migration of volumes and LUNs while data remains continuously available to its clients.[20] In version 9.0, NetApp renamed Data ONTAP to ONTAP.

Data protocols

ONTAP is considered to be a unified storage system, meaning that it supports both block-level (FC, FCoE, NVMeoF, and iSCSI) and file-level (NFS, pNFS, CIFS/SMB) protocols for its clients. SDS versions of ONTAP (ONTAP Select and Cloud Volumes ONTAP) do not support the FC, FCoE, or NVMeoF protocols due to their software-defined nature.

NFS

NFS was the first protocol available in ONTAP. The latest versions of ONTAP 9 support NFSv2, NFSv3, NFSv4 (4.0 and 4.1) and pNFS. Starting with ONTAP 9.5, 4-byte UTF-8 sequences, for characters outside the Basic Multilingual Plane, are supported in names for files and directories.[21]

SMB/CIFS

ONTAP supports SMB 2.0 and higher, up to SMB 3.1. Starting with ONTAP 9.4, SMB Multichannel is supported, which provides functionality similar to multipathing in SAN protocols. Starting with ONTAP 8.2, the CIFS protocol supports Continuous Availability (CA) with SMB 3.0 for Microsoft Hyper-V over SMB and SQL Server over SMB. ONTAP supports SMB encryption, which is also known as sealing. Encryption accelerated by AES instructions (Intel AES-NI) is supported in SMB 3.0 and later.

FCP

ONTAP on physical appliances supports FCoE as well as FC protocol, depending on HBA port speed.

iSCSI

The iSCSI Data Center Bridging (DCB) protocol is supported with A220/FAS2700 systems.

NVMeoF

NVMe over Fabrics (NVMeoF) refers to the ability to use the NVMe protocol over existing network infrastructure such as Ethernet (converged or traditional), TCP, Fibre Channel, or InfiniBand for transport (as opposed to running NVMe over PCI). NVMe is a SAN block-level data storage protocol. NVMeoF is supported only on All-Flash A-Systems and is not supported on the low-end A200 and A220 systems. Starting with ONTAP 9.5, the ANA protocol is supported, which provides multipathing functionality for NVMe similar to ALUA. ANA for NVMe is currently supported only with SUSE Enterprise Linux 15. FC-NVMe without ANA is supported with SUSE Enterprise Linux 12 SP3 and RedHat Enterprise Linux 7.6.

FC-NVMe

Starting with ONTAP 9.4, NetApp supports NVMeoF over the Fibre Channel protocol at 32/16 Gb (FC-NVMe) for its All-Flash FAS systems A800, A700/A700s, and A300. The operating systems supported with FC-NVMe are Oracle Linux, VMware, Windows Server, SUSE Linux, and RedHat Linux.

High Availability

High Availability (HA) is a clustered configuration of a storage system with two nodes, or HA pairs, which aims to ensure an agreed level of operation during expected and unexpected events like reboots and software or firmware updates.

HA Pair

Even though a single HA pair consists of two nodes (or controllers), NetApp has designed it in such a way that it behaves as a single storage system. HA configurations in ONTAP employ a number of techniques to present the two nodes of the pair as a single system. This allows the storage system to provide its clients with nearly uninterrupted access to their data should a node either fail unexpectedly or need to be rebooted, in an operation known as a 'takeover'.

For example: on the network level, ONTAP will temporarily migrate the IP address of the downed node to the surviving node, and where applicable it will also temporarily switch ownership of FC WWPNs from the downed node to the surviving node. On the data level, the contents of the disks that are assigned to the downed node will automatically be available for use via the surviving node.

FAS and AFF storage systems use enterprise-level HDD and SSD drives housed in disk shelves that have two bus ports, with one port connected to each controller. All of ONTAP's disks have an ownership marker written to them to reflect which controller in the HA pair owns and serves each individual disk. An aggregate can include only disks owned by a single node, so each aggregate is owned by one node, and any upper objects such as FlexVol volumes, LUNs, and file shares are served by a single controller. Each controller can have its own disks and aggregates and serve them; such HA pair configurations are therefore called Active/Active, because both nodes are utilized simultaneously even though they are not serving the same data.

Once the downed node of the HA pair has been repaired, or whatever maintenance window that necessitated a takeover has been completed, and the downed node is up and running without issue, a 'giveback' command can be issued to bring the HA pair back to 'Active/Active' status.
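The takeover and giveback cycle described above can be sketched as a small Python state model. The class `HAPair`, its method names, and the node and aggregate names are hypothetical; the sketch only tracks which node serves each aggregate and ignores network address migration.

```python
class HAPair:
    """Toy model of aggregate ownership in an Active/Active HA pair."""

    def __init__(self, node_a, node_b):
        self.nodes = (node_a, node_b)
        self.home = {}     # aggregate -> node that owns it
        self.serving = {}  # aggregate -> node currently serving it

    def add_aggregate(self, name, home_node):
        self.home[name] = home_node
        self.serving[name] = home_node

    def partner(self, node):
        node_a, node_b = self.nodes
        return node_b if node == node_a else node_a

    def takeover(self, downed_node):
        """The surviving node starts serving the downed node's aggregates."""
        survivor = self.partner(downed_node)
        for aggregate, node in list(self.serving.items()):
            if node == downed_node:
                self.serving[aggregate] = survivor

    def giveback(self, repaired_node):
        """Return aggregates to their home node once it is healthy again."""
        for aggregate, home_node in self.home.items():
            if home_node == repaired_node:
                self.serving[aggregate] = home_node


pair = HAPair("node-01", "node-02")
pair.add_aggregate("aggr1", "node-01")
pair.add_aggregate("aggr2", "node-02")
pair.takeover("node-01")
print(pair.serving)  # both aggregates served by node-02
pair.giveback("node-01")
print(pair.serving)  # back to Active/Active
```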

HA interconnect

High-availability clusters (HA clusters) are the first type of clusterization introduced in ONTAP systems. It aimed to ensure an agreed level of operation. It is often confused with the horizontal scaling ONTAP clusterization that came from the Spinnaker acquisition; therefore, NetApp, in its documentation, refers to an HA configuration as an HA pair rather than as an HA cluster.

An HA pair uses some form of network connectivity (often direct connectivity) for communication between the servers in the pair; this is called an HA interconnect (HA-IC). The HA interconnect can use Ethernet or InfiniBand as the communication medium. It is used for non-volatile memory log (NVLOG) replication using RDMA technology and for some other purposes, always between the two nodes of an HA pair and only to ensure an agreed level of operation during events like reboots. ONTAP assigns dedicated, non-sharable HA ports for the HA interconnect, which can be external or built into the chassis (and not visible from the outside). The HA-IC should not be confused with the intercluster or intracluster interconnect that is used for SnapMirror and can coexist with data protocols on data ports, or with the cluster interconnect ports used for horizontal scaling and online data migration across the multi-node cluster. HA-IC interfaces are visible only at the node shell level. Starting with the A320, HA-IC and cluster interconnect traffic use the same ports.

MetroCluster

MetroCluster local and DR pair memory replication in NetApp FAS/AFF systems configured as MCC

MetroCluster (MC) adds a level of data availability on top of HA configurations. It was initially supported only with FAS and AFF storage systems; later an SDS version of MetroCluster was introduced with the ONTAP Select and Cloud Volumes ONTAP products. In an MC configuration, two storage systems (each of which can be a single node or an HA pair) form a MetroCluster. The two systems are often located at two sites up to 300 km apart, which is why the configuration is called a geo-distributed system. Plex is the key underlying technology that synchronizes data between the two sites in a MetroCluster. In MC configurations NVLOG is also replicated between the storage systems at the two sites, but over dedicated ports, in addition to the HA interconnect. Starting with ONTAP 9.5, SVM-DR is supported in MetroCluster configurations.

MetroCluster SDS

MetroCluster SDS (MC SDS) is a feature of ONTAP Select software. Similarly to MetroCluster on FAS/AFF systems, it synchronously replicates data between two sites using SyncMirror and automatically switches to the surviving node, transparently to users and applications. MetroCluster SDS works as an ordinary HA pair, so data volumes, LUNs, and LIFs can be moved online between aggregates and controllers on both sites. This differs slightly from traditional MetroCluster on FAS/AFF systems, where data can be moved across the storage cluster only within the site where the data is originally located. In traditional MetroCluster the only way for applications to access data locally on the remote site is to disable one entire site, a process called switchover, whereas in MC SDS an ordinary HA process occurs. MetroCluster SDS uses ONTAP Deploy as the mediator (in the FAS and AFF world this functionality is known as the MetroCluster tiebreaker). ONTAP Deploy comes bundled with ONTAP Select and is generally used for deploying clusters, installing licenses, and monitoring them.

Horizontal Scaling Clusterization

Horizontal scaling ONTAP clusterization came from the Spinnaker acquisition and is often referred to by NetApp as 'Single Namespace', 'Horizontal Scaling Cluster', 'ONTAP Storage System Cluster', or just 'ONTAP Cluster'; it is therefore often confused with an HA pair or even with MetroCluster functionality. While MetroCluster and HA are data protection technologies, single namespace clusterization does not provide data protection. An ONTAP cluster is formed out of one or several HA pairs and adds Non-Disruptive Operations (NDO) functionality to the ONTAP system, such as non-disruptive online data migration across nodes in the cluster and non-disruptive hardware upgrades. Data migration for NDO in an ONTAP cluster requires dedicated Ethernet ports, called the cluster interconnect, and does not use the HA interconnect for this purpose. The cluster interconnect and the HA interconnect cannot share the same ports. A cluster with a single HA pair can have directly connected cluster interconnect ports, while systems with 4 or more nodes require two dedicated Ethernet cluster interconnect switches. An ONTAP cluster can consist only of an even number of nodes (they must be configured as HA pairs), except for a single-node cluster. A single-node ONTAP cluster is also called non-HA (stand-alone). An ONTAP cluster is managed through a single pane of glass: built-in management with a web-based GUI, CLI (SSH and PowerShell), and API. An ONTAP cluster provides a single namespace for NDO through SVMs. Single namespace in an ONTAP system is the name for a collection of techniques that the cluster uses to separate data from front-end network connectivity with data protocols like FC, FCoE, FC-NVMe, iSCSI, NFS, and CIFS, and thereby provide a kind of data virtualization for online data mobility across cluster nodes.

On the network layer, single namespace provides a number of techniques for non-disruptive IP address migration, such as CIFS Continuous Availability (Transparent Failover), NetApp's Network Failover for NFS, and SAN ALUA and path election for online front-end traffic rebalancing with data protocols. NetApp AFF and FAS clusters can consist of different HA pairs (AFF and FAS, different models and generations) and can include up to 24 nodes with NAS protocols or 12 nodes with SAN protocols. SDS systems can't be intermixed with physical AFF or FAS storage systems.
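The node-count rules stated above (a single node, or an even number of nodes up to 24 for NAS or 12 for SAN) can be captured in a short Python check. The function name and the `"NAS"`/`"SAN"` keys are assumptions for illustration, and the limits are simply the figures quoted in the text.

```python
# Maximum cluster sizes quoted in the text, keyed by protocol family.
MAX_NODES = {"NAS": 24, "SAN": 12}


def valid_cluster_size(node_count, protocol_family):
    """Return True if node_count is allowed for the given protocol family."""
    limit = MAX_NODES[protocol_family]
    if node_count == 1:
        return True  # single-node (non-HA, stand-alone) cluster
    # Otherwise nodes come in HA pairs, so the count must be even.
    return node_count % 2 == 0 and 2 <= node_count <= limit


for count in (1, 3, 12, 24):
    print(count, valid_cluster_size(count, "NAS"), valid_cluster_size(count, "SAN"))
```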

Storage Virtual Machine

A Storage Virtual Machine (SVM), also known as a Vserver, is a layer of abstraction. Alongside other functions, it is responsible for virtualizing and separating the physical front-end data network from the data located on FlexVol volumes. SVMs are used for Non-Disruptive Operations and Multi-Tenancy.

Non Disruptive Operations

SAN ALUA in ONTAP: preferred path with direct data link

There are a few Non-Disruptive Operations (NDO) available with a (Clustered) ONTAP system. NDO data operations include aggregate relocation between the nodes of an HA pair, online FlexVol volume migration (known as the Volume Move operation) across aggregates and nodes within a cluster, and LUN migration (known as the LUN Move operation) between FlexVol volumes within a cluster. LUN Move and Volume Move operations use cluster interconnect ports for data transfer (the HA-IC is not used for such operations). SVMs behave differently with network NDO operations, depending on the front-end data protocol. To bring latency back to its original level, FlexVol volumes and LUNs have to be located on the same node as the network address through which clients access the storage system, so a network address can be created (for SAN protocols) or moved (for NAS protocols). NDO operations are free functionality.

NAS LIF

The NAS front-end data protocols are NFSv2, NFSv3, NFSv4, CIFSv1, SMBv2, and SMBv3. They do not provide network redundancy within the protocol itself, so they rely on storage and switch functionality for this. For this reason ONTAP supports Ethernet Port Channel and LACP on its Ethernet network ports at layer 2 (known in ONTAP as an interface group, ifgrp) within a single node, and also non-disruptive network failover between nodes in the cluster at layer 3, which migrates Logical Interfaces (LIFs) and their associated IP addresses (similar to VRRP) to a surviving node and back home when the failed node is restored.
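The NAS LIF behaviour described above, migrating to a surviving node and later reverting home, can be modelled with a brief Python sketch. The `Lif` class, the two helper functions, and the choice of the first healthy node as the failover target are hypothetical simplifications; real failover targets are governed by policy that the text does not detail.

```python
class Lif:
    """Toy logical interface: an IP address that can move between nodes."""

    def __init__(self, name, ip, home_node):
        self.name = name
        self.ip = ip
        self.home_node = home_node
        self.current_node = home_node


def fail_over(lifs, failed_node, healthy_nodes):
    """Move every LIF hosted on the failed node to the first healthy node."""
    for lif in lifs:
        if lif.current_node == failed_node:
            lif.current_node = healthy_nodes[0]  # assumption: simplest target choice


def revert_home(lifs, restored_node):
    """Send LIFs back to their home node once it has been restored."""
    for lif in lifs:
        if lif.home_node == restored_node:
            lif.current_node = restored_node


lifs = [Lif("nfs_lif1", "192.0.2.10", "node-01"), Lif("nfs_lif2", "192.0.2.11", "node-02")]
fail_over(lifs, "node-01", ["node-02"])
print([(lif.name, lif.current_node) for lif in lifs])
revert_home(lifs, "node-01")
print([(lif.name, lif.current_node) for lif in lifs])
```

The IP address stays attached to the LIF throughout, which is why clients keep using the same address while the hosting node changes.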

SAN LIF

SAN LIFs serve the front-end SAN data protocols. The ALUA feature is used for network load balancing and redundancy in SAN protocols: all the ports on the node where the data is located are reported to clients as active preferred paths, with load balancing between them, while all other network ports on all other nodes in the cluster are active non-preferred paths. If one port or an entire node goes down, the client still has access to its data through a non-preferred path. Starting with ONTAP 8.3, Selective LUN Mapping (SLM) was introduced to reduce the number of paths to a LUN. It removes the non-optimized paths through all other cluster nodes except the HA partner of the node owning the LUN, so the cluster reports to the host only paths from the HA pair where the LUN is located. Because ONTAP provides ALUA functionality for SAN protocols, SAN network LIFs do not migrate as they do with NAS protocols. When data or network interface migration finishes, it is transparent to the storage system's clients due to the ONTAP architecture, but it can cause temporary or permanent indirect data access through the ONTAP cluster interconnect (the HA-IC is not used in such situations), which slightly increases latency for the clients. SAN LIFs are used for the FC, FCoE, iSCSI, and FC-NVMe protocols.
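The path reporting described above can be sketched in Python: the owning node's paths are preferred, other nodes' paths are non-preferred, and Selective LUN Mapping trims the list to the owning HA pair. The function name, the `slm` flag, and the node names are assumptions for illustration; the path state labels follow the wording of the text.

```python
def reported_paths(lun_owner, ha_partner, cluster_nodes, slm=True):
    """Return a mapping of node -> path state as reported to a SAN host."""
    paths = {}
    for node in cluster_nodes:
        if node == lun_owner:
            paths[node] = "active/preferred"      # direct path to the data
        elif not slm or node == ha_partner:
            paths[node] = "active/non-preferred"  # indirect path via another node
        # With SLM enabled, nodes outside the owning HA pair report no path.
    return paths


nodes = ["node-01", "node-02", "node-03", "node-04"]
print(reported_paths("node-01", "node-02", nodes, slm=False))
print(reported_paths("node-01", "node-02", nodes, slm=True))
```

In this four-node example, enabling SLM reduces the reported paths from four nodes to the two nodes of the owning HA pair.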

VIP LIF

VIP (Virtual IP) LIFs require a top-of-rack BGP router. BGP data LIFs can be used alongside NAS LIFs over Ethernet in a NAS environment, but BGP LIFs automatically load-balance traffic based on routing metrics and avoid inactive, unused links. BGP LIFs provide distribution across all the NAS LIFs in a cluster and are not limited to a single node as NAS LIFs are. BGP LIFs provide smarter load balancing than the hash algorithms used by Ethernet Port Channel and LACP with interface groups. VIP LIF interfaces are tested and can be used with MCC and SVM-DR.

Management interfaces

Node management LIF: this interface can migrate with its associated IP address across the Ethernet ports of a single node and is available only while ONTAP is running on the node. It is usually located on the e0M port of the node. The node management IP is sometimes used by the cluster admin to reach the cluster shell through a particular node, in the rare cases where commands have to be issued from that node.

Cluster management LIF: this interface and its associated IP address are available only while the entire cluster is up and running, and by default the LIF can migrate across Ethernet ports. It is often located on one of the e0M ports of one of the cluster nodes and is used by the cluster administrator for management purposes: API communications, the HTML GUI, and SSH console management. By default, SSH connects the administrator to the cluster shell.

Service Processor (SP): these interfaces are available only on hardware appliances like FAS and AFF. They allow out-of-band SSH console communication with a small embedded computer installed on the controller mainboard which, similarly to IPMI, makes it possible to connect to, monitor, and manage the controller even if the ONTAP OS is not booted. With the SP it is possible to forcibly reboot or halt a controller and to monitor coolers, temperature, and so on. Connecting to the SP over SSH brings the administrator to the SP console, from which it is possible to switch to the cluster shell. Each controller has one SP, which does not migrate like some other management interfaces. Usually, e0M and the SP both live on a single physical management (wrench) Ethernet port, but each has its own dedicated MAC address. Node LIFs, the cluster LIF, and the SP often use the same IP subnet.

SVM management LIF: similarly to the cluster management LIF, it can migrate across all the Ethernet ports on the nodes of the cluster, but it is dedicated to the management of a single SVM. The SVM LIF has no GUI capability and serves only API communications and SSH console management. It can live on an e0M port but is often located on a data port of a cluster node, on a dedicated management VLAN, and its IP subnet can differ from that of the node and cluster LIFs.

Cluster interfaces

Cluster interconnect LIFs use dedicated Ethernet ports and cannot share ports with management and data interfaces. They serve the horizontal scaling functionality, for example when a LUN or a volume migrates from one node of the cluster to another. A cluster interconnect LIF, similarly to a node management LIF, can migrate between the ports of a single node. Intercluster LIFs can live on and share the same Ethernet ports as data LIFs and are used for SnapMirror replication. Intercluster LIFs, similarly to node management and cluster interconnect LIFs, can migrate between the ports of a single node.

Multi Tenancy

ONTAP provides two techniques for multi-tenancy: Storage Virtual Machines and IP Spaces. On one hand, SVMs are similar to virtual machines such as those under KVM: they provide a virtualization abstraction over physical storage. On the other hand, they are quite different, because unlike ordinary virtual machines SVMs do not allow third-party binary code to run, as is possible in Pure Storage systems; they just provide a virtualized environment and storage resources. Also, unlike ordinary virtual machines, SVMs do not run on a single node; to the end user it looks as if an SVM runs as a single entity on each node of the whole cluster. SVMs divide the storage system into slices, so a few divisions or even organizations can share a storage system without knowing about or interfering with each other, while using the same ports, data aggregates, and nodes in the cluster but separate FlexVol volumes and LUNs. Each SVM can run its own front-end data protocols and set of users, and use its own network addresses and management IP. With IP Spaces, users can have the same IP addresses and networks on the same storage system without interfering. Each ONTAP system must run at least one data SVM in order to function but may run more. There are a few levels of ONTAP management, and the Cluster Admin level has all of the available privileges. Each data SVM provides its owner with a vsadmin account, which has nearly the full functionality of the Cluster Admin level but lacks physical-level management capabilities like RAID group configuration, aggregate configuration, and physical network port configuration. However, vsadmin can manage logical objects inside an SVM, for example create, delete, and configure LUNs, FlexVol volumes, and network addresses, so two SVMs in a cluster can't interfere with each other. One SVM cannot create, delete, modify, or even see objects of another SVM, so to SVM owners such an environment looks as if they are the only users of the entire storage system cluster. Multi-tenancy is free functionality in ONTAP.

FlexClone

NetApp FlexClone works like NetApp RoW snapshots but allows writes to the clone

FlexClone is a licensed feature used for creating writable copies of volumes, files or LUNs. For volumes, a FlexClone acts like a snapshot that can be written to, whereas an ordinary snapshot is read-only. Because of the WAFL architecture, FlexClone copies only metadata inodes, so a file, LUN or volume is cloned almost instantly regardless of its size.
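The idea that a clone copies only metadata and shares data blocks with its parent can be sketched with a toy block map. This is a simplified illustration under stated assumptions, not WAFL's actual on-disk structures; the `Volume` class and its methods are hypothetical names.

```python
class Volume:
    """Toy volume: maps logical block numbers to ids in a shared block store."""

    def __init__(self, block_map, store):
        self.block_map = dict(block_map)  # metadata only
        self.store = store                # physical blocks shared by all volumes

    def read(self, lbn):
        return self.store[self.block_map[lbn]]

    def write(self, lbn, data):
        # Redirect the write to a fresh physical block, so blocks that are
        # still referenced by another volume are never overwritten.
        new_id = max(self.store, default=-1) + 1
        self.store[new_id] = data
        self.block_map[lbn] = new_id

    def clone(self):
        # Copy only the block map (metadata); no data block is duplicated.
        return Volume(self.block_map, self.store)


store = {0: b"alpha", 1: b"beta"}
parent = Volume({0: 0, 1: 1}, store)
clone = parent.clone()      # cost depends on metadata size, not data size
clone.write(1, b"gamma")    # only the changed block consumes new space
```

After the write, the parent still reads its original data, while the clone sees the new block and continues to share the untouched one.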

SnapRestore

SnapRestore is a licensed feature used for reverting the active file system of a FlexVol to a previously created snapshot of that FlexVol by restoring metadata inodes into the active file system. SnapRestore is also used to restore a single file or LUN from a previously created snapshot of the FlexVol where that object is located. Without a SnapRestore license, in a NAS environment it is still possible to see snapshots in the network file share and copy directories and files out of them for restore purposes. In a SAN environment there is no equivalent way to perform restore operations. In both SAN and NAS environments it is possible to copy files, directories, LUNs and entire FlexVol contents with the ONTAP command ndmpcopy, which is free. The time needed to copy data depends on the size of the object and can be considerable, whereas SnapRestore, which only restores metadata inodes into the active file system, is almost instant regardless of the size of the object being restored to its previous state.

FlexGroup

FlexGroup is a free feature introduced in version 9 that utilizes the clustered architecture of the ONTAP operating system. FlexGroup provides cluster-wide scalable NAS access with the NFS and CIFS protocols.[22] A FlexGroup volume is a collection of constituent FlexVol volumes, called simply 'constituents', which are distributed across the nodes in the cluster and transparently aggregated into a single space. A FlexGroup volume therefore aggregates the performance and capacity of all its constituents, and thus of all the cluster nodes where they are located. For the end user, each FlexGroup volume is represented by a single, ordinary file share.[23] The full potential of FlexGroup will be revealed with technologies like pNFS (currently not supported with FlexGroup), NFS multipathing (session trunking, also not available in ONTAP), SMB multichannel (currently not supported with FlexGroup), SMB Continuous Availability (FlexGroup with SMB CA is supported with ONTAP 9.6), and VIP (BGP). The FlexGroup feature in ONTAP 9 allows a single namespace to scale massively, to over 20 PB with over 400 billion files, while spreading the performance evenly across the cluster.[24] Starting with ONTAP 9.5, FlexGroup supports FabricPool (it is recommended to have all the constituent volumes back up to a single S3 bucket); SMB features for native file auditing, FPolicy, Storage-Level Access Guard (SLAG), copy offload (ODX) and inherited watches of change notifications; and quotas and qtrees. SMB Continuous Availability (CA) on FlexGroup allows running MS SQL and Hyper-V on FlexGroup, and FlexGroup is supported on MetroCluster.

SnapMirror

Unified Replication

Snapshots form the basis for NetApp's asynchronous disk-to-disk (D2D) replication technology, SnapMirror, which replicates Flexible Volume snapshots between any two ONTAP systems. SnapMirror is also supported from ONTAP to Cloud Backup and from SolidFire to ONTAP systems as part of NetApp's Data Fabric vision. NetApp also offers a D2D backup and archive feature named SnapVault, which is based on replicating and storing snapshots. Open Systems SnapVault allows Windows and UNIX hosts to back up data to an ONTAP system and store any filesystem changes in snapshots (not supported in ONTAP 8.3 and onwards). SnapMirror is designed to be part of a disaster recovery plan: it stores on the disaster recovery site an exact copy of the data as of the time the snapshot was created, and can keep the same snapshots on both systems. SnapVault, on the other hand, is designed to store fewer snapshots on the source storage system and more snapshots on a secondary site for a long period of time.

Data captured in SnapVault snapshots on the destination system cannot be modified or accessed read-write on the destination; it can only be restored to the primary storage system, or the SnapVault snapshot can be deleted. Data captured in snapshots on both sites, with both SnapMirror and SnapVault, can be cloned and modified with the FlexClone feature for data cataloging, backup consistency and validation, test and development purposes, etc.

Later versions of ONTAP introduced cascading replication, where one volume can replicate to another, and then another, and so on. A fan-out configuration is a deployment where one volume is replicated to multiple storage systems. Both fan-out and cascade replication deployments support any combination of SnapMirror DR, SnapVault, or unified replication. It is possible to use a fan-in deployment to create data protection relationships between multiple primary systems and a single secondary system; each relationship must use a different volume on the secondary system. Starting with ONTAP 9.4, destination SnapMirror and SnapVault systems enable automatic inline and offline deduplication by default.
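The cascade, fan-out and fan-in topologies can be pictured as a list of (source, destination) relationships. The sketch below is illustrative only: the volume names and helper functions are hypothetical and are not ONTAP commands. It finds fan-out sources and cascade hops, and flags destinations that violate the rule that each relationship must use a different volume on the secondary system.

```python
from collections import Counter


def fan_out_sources(relationships):
    """Sources that replicate to more than one destination."""
    counts = Counter(src for src, _dst in relationships)
    return sorted(src for src, n in counts.items() if n > 1)


def cascade_hops(relationships):
    """Volumes that are a destination of one relationship and a source of another."""
    sources = {src for src, _dst in relationships}
    return sorted({dst for _src, dst in relationships if dst in sources})


def shared_destinations(relationships):
    """Destination volumes used by more than one relationship (not allowed)."""
    counts = Counter(dst for _src, dst in relationships)
    return sorted(dst for dst, n in counts.items() if n > 1)


# Hypothetical "system:volume" names, for illustration only.
relationships = [
    ("A:vol1", "B:vol1_dst"),   # A replicates to B ...
    ("B:vol1_dst", "C:vol1_dst"),  # ... and B cascades on to C
    ("A:vol1", "D:vol1_dst"),   # A also fans out to D
    ("E:vol2", "D:vol1_dst"),   # invalid fan-in: reuses a destination volume
]
```

A valid fan-in deployment would give the relationship from `E:vol2` its own destination volume on system D.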

An intercluster relationship is a SnapMirror relationship between two clusters, while an intracluster relationship is a SnapMirror relationship between storage virtual machines (SVMs) in a single cluster.

SnapMirror can operate in version-dependent mode, where the two storage systems must run the same version of ONTAP, or in version-flexible mode. Types of SnapMirror replication:

- Data Protection (DP): Also known as SnapMirror DR. A version-dependent replication type originally developed by NetApp for Volume SnapMirror; the destination system must run the same or a higher version of ONTAP. Not used by default in ONTAP 9.3 and higher. Volume-level, block-based and metadata-independent replication that uses the Block-Level Engine (BLE).

- Extended Data Protection (XDP): Used by SnapMirror Unified replication and SnapVault. XDP uses the Logical Replication Engine (LRE) or, if volume efficiency is different on the destination volume, the Logical Replication Engine with Storage Efficiency (LRSE). Used as volume-level replication, although technologically it could be used for directory-based replication; it is inode-based and metadata-dependent (therefore not recommended for NAS with millions of files).

- Load Sharing (LS): Mostly used for internal purposes such as keeping copies of the root volume of an SVM.

- SnapMirror to Tape (SMTape): A Snapshot copy-based incremental or differential backup from volumes to tape; SMTape performs a block-level tape backup using NDMP-compliant backup applications such as CommVault Simpana.

SnapMirror-based technologies:

- Unified replication: A volume with Unified replication can hold both SnapMirror and SnapVault snapshots. Unified replication is a combination of SnapMirror Unified replication and SnapVault that uses a single replication connection. Both SnapMirror Unified replication and SnapVault use the same XDP replication type. SnapMirror Unified Replication is also known as Version-flexible SnapMirror. It was introduced in ONTAP 8.3 and removes the restriction that the destination storage must run the same, or a higher, version of ONTAP.

- SVM-DR (SnapMirror SVM): Replicates all volumes (exceptions allowed) in a selected SVM and some of the SVM settings; the replicated settings depend on the protocol used (SAN or NAS).

- Volume Move: Also known as DataMotion for Volumes. SnapMirror replicates a volume from one aggregate to another within a cluster; I/O operations then stop for a timeout acceptable to end clients, the final replica is transferred to the destination, the source is deleted, and the destination becomes read-write accessible to its clients.

SnapMirror is a licensed feature; a SnapVault license is not required if a SnapMirror license is already installed.

SVM-DR

SVM DR is based on SnapMirror technology and transfers all the volumes (exceptions allowed), and the data in them, from a protected SVM to a DR site. There are two modes for SVM DR: identity preserve and identity discard. In identity discard mode, the data in the volumes is copied to the secondary system, but the DR SVM does not preserve information such as the SVM configuration, IP addresses and CIFS AD integration from the original SVM. On the other hand, in identity discard mode the data on the secondary system can be brought online in read-write mode while the primary system is online too, which can be helpful for DR testing, test/dev and other purposes. Identity discard therefore requires additional configuration on the secondary site if a disaster occurs on the primary site.

In identity preserve mode, SVM-DR copies the volumes and the data in them, and also information such as the SVM configuration, IP addresses and CIFS AD integration. This requires less configuration on the DR site in the event of a disaster on the primary site, but in this mode the primary system must be offline to ensure there is no conflict.

SnapMirror Synchronous

SnapMirror Synchronous (SM-S for short) is a zero-RPO data replication technology that was previously available in 7-Mode systems and was not available in (clustered) ONTAP until version 9.5. SnapMirror Sync replicates data at the volume level and requires an RTT of less than 10 ms, which corresponds to a distance of approximately 150 km. SnapMirror Sync can work in two modes. Full synchronous mode (the default) guarantees zero application data loss between the two sites by disallowing writes if SnapMirror Sync replication fails for any reason. Relaxed synchronous mode allows application writes to continue on the primary site if SnapMirror Sync fails; once the relationship resumes, an automatic re-sync occurs. SM-S supports the FC, iSCSI, NFSv3, NFSv4, SMB v2 and SMB v3 protocols, has a limit of 100 volumes for AFF, 40 volumes for FAS and 20 for ONTAP Select, and works on any controller with 16 GB of memory or more. SM-S is useful for replicating transactional logs from Oracle DB, MS SQL, MS Exchange, etc. Source and destination FlexVol volumes can be in a FabricPool aggregate but must use the backup policy; FlexGroup volumes and quotas are not currently supported with SM-S. SM-S is not a free feature; the license is included in the premium bundle. Unlike SyncMirror, SM-S does not use RAID and plex technologies and can therefore be configured between two different NetApp ONTAP storage systems with different disk types and media.
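The difference between the two modes comes down to what happens to a write when replication fails. The following is a minimal sketch of that decision only, assuming hypothetical callables for the replication and local-commit steps; it says nothing about how ONTAP actually orders or acknowledges I/O.

```python
from enum import Enum


class SyncMode(Enum):
    FULL = "full"
    RELAXED = "relaxed"


class ReplicationError(Exception):
    """Raised by the (hypothetical) replicate step when the secondary is unreachable."""


def handle_write(mode, replicate, commit_local):
    """Process one write and return the resulting relationship state."""
    try:
        replicate()
    except ReplicationError:
        if mode is SyncMode.FULL:
            # Full synchronous: refuse the write rather than let the sites diverge.
            raise
        # Relaxed synchronous: keep serving the application; a re-sync is
        # needed once the relationship resumes.
        commit_local()
        return "out-of-sync"
    commit_local()
    return "in-sync"


def failing_replicate():
    raise ReplicationError("secondary site unreachable")


committed = []
relaxed_state = handle_write(SyncMode.RELAXED, failing_replicate,
                             lambda: committed.append("w1"))

try:
    handle_write(SyncMode.FULL, failing_replicate, lambda: committed.append("w2"))
    full_write_rejected = False
except ReplicationError:
    full_write_rejected = True
```

In relaxed mode the write `w1` is committed locally and the relationship is marked out of sync; in full mode the write `w2` is rejected and never committed.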

FlexCache Volumes

FlexCache technology was previously available in 7-Mode systems and was not available in (clustered) ONTAP until version 9.5. FlexCache allows NAS data to be served across multiple global sites with file locking mechanisms. FlexCache volumes can cache reads, writes, and metadata. A write at an edge triggers a push of the modified data to all edge ONTAP systems that requested the data from the origin, whereas in 7-Mode all writes went to the origin and it was the edge ONTAP system's job to check that the file had not been updated. FlexCache volumes can also be smaller than the origin volume, which is another improvement over 7-Mode. Initially, only NFS v3 was supported, with ONTAP 9.5. FlexCache volumes are sparsely populated within an ONTAP cluster (intracluster) or across multiple ONTAP clusters (intercluster). FlexCache communicates with other nodes over intercluster LIFs. FlexCache licenses are based on total cluster cache capacity and are not included in the premium bundle. FAS, AFF and ONTAP Select systems can be combined to use FlexCache technology. Up to 10 FlexCache volumes can be created per origin FlexVol volume, and up to 10 FlexCache volumes per ONTAP node. The origin volume must be a FlexVol volume, while all FlexCache volumes have the FlexGroup volume format.

SyncMirror

SyncMirror replication using plexes

Data ONTAP also implements an option named RAID SyncMirror (RSM), using the plex technique, where all the RAID groups within an aggregate or traditional volume can be synchronously duplicated to another set of hard disks. This is typically done at another site via a Fibre Channel or IP link, or within a single controller with local SyncMirror for single disk-shelf resiliency. NetApp's MetroCluster configuration uses SyncMirror to provide a geo-cluster or an active/active cluster between two sites up to 300 km apart, or 700 km with ONTAP 9.5 and MCC-IP. SyncMirror can be used in software-defined storage platforms, on Cloud Volumes ONTAP, or on ONTAP Select. It provides high availability in environments with directly attached (non-shared) disks on top of commodity servers, or on FAS and AFF platforms in local SyncMirror or MetroCluster configurations. SyncMirror is a free feature.

SnapLock

SnapLock implements Write Once Read Many (WORM) functionality on magnetic and SSD disks instead of optical media, so that data cannot be deleted until its retention period has been reached. SnapLock exists in two modes: compliance and enterprise. Compliance mode was designed to assist organizations in implementing a comprehensive archival solution that meets strict regulatory retention requirements, such as regulations dictated by the SEC 17a-4(f) rule, FINRA, HIPAA, CFTC Rule 1.31(b), DACH, Sarbanes-Oxley, GDPR, Check 21, EU Data Protection Directive 95/46/EC, NF Z 42-013/NF Z 42-020, Basel III, MiFID, the Patriot Act, the Gramm-Leach-Bliley Act, etc. Records and files committed to WORM storage on a SnapLock Compliance volume cannot be altered or deleted before the expiration of their retention period. Moreover, a SnapLock Compliance volume cannot be destroyed until all data has reached the end of its retention period. SnapLock is a licensed feature.

SnapLock Enterprise is geared toward assisting organizations that are more self-regulated and want more flexibility in protecting digital assets with WORM-type data storage. Data stored as WORM on a SnapLock Enterprise volume is protected from alteration or modification. There is one main difference from SnapLock Compliance: as the files being stored are not for strict regulatory compliance, a SnapLock Enterprise volume can be destroyed by an administrator with root privileges on the ONTAP system containing the SnapLock Enterprise volume, even if the designated retention period has not yet passed. In both modes, the retention period can be extended, but not shortened, as this is incongruous with the concept of immutability. Also, NetApp's SnapLock data volumes are equipped with a tamper-proof compliance clock, which is used as a time reference to block forbidden operations on files, even if the system time has been tampered with.
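The two retention rules described here (a retention period can be extended but never shortened, and deletion is judged against the compliance clock rather than the system time) can be sketched as follows. This is a toy model with hypothetical names, using plain integers as clock values; it is not the SnapLock interface.

```python
from dataclasses import dataclass


@dataclass
class WormFile:
    """Toy WORM record; retain_until is expressed in compliance-clock time."""
    retain_until: int

    def set_retention(self, new_retain_until):
        # Extending is allowed; shortening would break immutability.
        if new_retain_until < self.retain_until:
            raise PermissionError("retention period cannot be shortened")
        self.retain_until = new_retain_until

    def can_delete(self, compliance_clock_now):
        # Only the compliance clock is consulted, so changing the system
        # time does not make a file deletable any earlier.
        return compliance_clock_now >= self.retain_until


record = WormFile(retain_until=1000)
record.set_retention(1500)          # extension is accepted

try:
    record.set_retention(1200)      # shortening is refused
    shorten_allowed = True
except PermissionError:
    shorten_allowed = False
```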

Starting with ONTAP 9.5, SnapLock supports the Unified SnapMirror (XDP) engine, re-synchronization after failover without data loss, 1023 snapshots, efficiency mechanisms, and clock synchronization in SDS ONTAP.

FabricPool

FabricPool tiering to S3

FabricPool is available for SSD-only aggregates in FAS/AFF systems or in Cloud Volumes ONTAP on SSD media. Starting with ONTAP 9.4, FabricPool is supported on the ONTAP Select platform. Cloud Volumes ONTAP also supports an HDD + S3 FabricPool configuration. FabricPool provides automatic storage tiering of cold data blocks from fast media (usually SSD) on ONTAP storage to cold media, via an object protocol to object storage such as S3, and back. FabricPool can be configured in two modes: one migrates cold data blocks captured in snapshots, while the other migrates cold data blocks in the active file system. FabricPool preserves offline deduplication and offline compression savings. ONTAP 9.4 introduced FabricPool 2.0, with the ability to tier off active file system data (by default, data that has not been accessed for 31 days) and support for data compaction savings. The recommended ratio is 1:10 for inodes to data files. For clients connected to the ONTAP storage system, all the FabricPool ...
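The two tiering modes can be illustrated with a small selection function. This is a rough sketch under stated assumptions: the `Block` fields, the mode strings `"snapshot-only"` and `"active"`, and the rule that the active mode selects any block not accessed for the cooling period are hypothetical simplifications, with only the 31-day default taken from the text.

```python
from dataclasses import dataclass


@dataclass
class Block:
    block_id: int
    in_active_fs: bool     # False: referenced only by snapshots
    last_access_day: int   # day number of the most recent access


def select_cold_blocks(blocks, mode, today, cooling_days=31):
    """Return ids of the blocks a tiering pass would move to object storage."""
    selected = []
    for block in blocks:
        if mode == "snapshot-only":
            # Tier only blocks that no longer belong to the active file system.
            cold = not block.in_active_fs
        elif mode == "active":
            # Tier any block that has not been accessed for the cooling period.
            cold = today - block.last_access_day >= cooling_days
        else:
            raise ValueError(f"unknown mode: {mode}")
        if cold:
            selected.append(block.block_id)
    return selected


blocks = [
    Block(1, in_active_fs=True, last_access_day=95),
    Block(2, in_active_fs=True, last_access_day=40),
    Block(3, in_active_fs=False, last_access_day=10),
]
snapshot_tiered = select_cold_blocks(blocks, "snapshot-only", today=100)
active_tiered = select_cold_blocks(blocks, "active", today=100)
```

With these sample blocks, the snapshot-only pass selects block 3 alone, while the active pass also selects block 2, which has been idle for 60 days.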

| Type | Public |
|---|---|
| Founded | 1992 |
| Founder | David Hitz, James Lau, Michael Malcolm |
| Headquarters | Sunnyvale, California |
| Area served | Worldwide |
| Key people | George Kurian (CEO), Mike Nevens (chairman of the board) |
| Revenue | $6.15 billion (2019)[1] |
| | $1.34 billion (2019)[1] |
| | $2.34 billion (2019)[1] |
| Total assets | $10.03 billion (2018)[1] |
| Total equity | $9.98 billion (2018)[1] |
| Number of employees | 10,500[2] (2019) |
| Website | www.netapp.com |

NetApp, Inc. is a hybrid cloud data services and data management company headquartered in Sunnyvale, California. It has ranked in the Fortune 500 since 2012.[3] Founded in 1992[4] with an IPO in 1995,[5] NetApp offers hybrid cloud data services for management of applications and data across cloud and on-premises environments.

History

NetApp headquarters in Sunnyvale, California

NetApp was founded in 1992 by David Hitz, James Lau,[6] and Michael Malcolm[4][7] as Network Appliance, Inc.[8] At the time, its major competitor was Auspex Systems. In 1994, NetApp received venture capital funding from Sequoia Capital.[9] It had its initial public offering in 1995. NetApp thrived in the internet bubble years of the mid 1990s to 2001, during which the company grew to $1 billion in annual revenue. After the bubble burst, NetApp's revenues quickly declined to $800 million in its fiscal year 2002. Since then, the company's revenue has steadily climbed.

In 2006, NetApp sold the NetCache product line to Blue Coat Systems.[10]

In 2008, Network Appliance officially changed its legal name to NetApp, Inc. reflecting the nickname by which it was already well-known.[11]

On June 1, 2015, Tom Georgens stepped down as CEO and was replaced by George Kurian.[12]

In May 2018, NetApp announced its first end-to-end NVMe array, the All Flash FAS A800, with the release of ONTAP 9.4 software.[13] NetApp claims over 1.3 million IOPS at 500 microseconds per high-availability pair, read throughput of up to 300 GBps (gigabytes per second) per 24-node all-flash cluster, and 50% higher IOPS and up to 34% lower latency when the previous model, the A700, is upgraded to ONTAP 9.4.[14] In January 2019, Dave Hitz announced his retirement from NetApp.

Acquisitions

- 1997 - Internet Middleware (IMC) acquired for $10.5 million. IMC's web proxy caching software became the NetCache product line (which was resold in 2006).

- 2004 - Spinnaker Networks acquired for $300 million. Technologies from Spinnaker were integrated into Data ONTAP GX, first released in 2006; Data ONTAP GX later became Clustered Data ONTAP.

- 2005 - Alacritus acquired for $11 million. The tape virtualization technology Alacritus brought to NetApp was integrated into the NetApp NearStore Virtual Tape Library (VTL) product line, introduced in 2006.

- 2005 - Decru: Storage security systems and key management.[15]

- 2006 - Topio acquired for $160 million. Software that helped replicate, recover, and protect data over any distance regardless of the underlying server or storage infrastructure. This technology became known as ReplicatorX (Open System SnapVault), and has since been abandoned.

- 2008 - Onaro acquired for $120 million. Storage service management software which helps customers manage storage more efficiently with guaranteed service levels for availability and performance. Onaro's SANscreen technology was launched under that name and probably later influenced NetApp OnCommand Insight.

- 2010 - Bycast acquired for between $20 million and $50 million. Technologies from Bycast became the basis of the StorageGRID product.

- 2011 - Akorri acquired for $60 million. Cross-domain analysis and advanced analytics to help customers manage, optimize, and plan performance and utilization across their data center infrastructure.

- 2011 - Engenio (LSI) acquired for $480 million. Engenio was the external storage systems business unit of LSI Corporation. Launched as the NetApp E-Series product line.

- 2012 - Bycast: Development of software for storage with the purpose of control on the petabyte level; global collections of images, videos, and records

- 2012 - Cache IQ: Development of NAS cache systems

- 2013 - IonGrid: A technology developer that allows iOS devices to access users and internal business applications through a secure connection

- 2014 - SteelStore: NetApp acquired Riverbed Technology's SteelStore line of data backup and protection products,[16] which it later renamed AltaVault[17] and then Cloud Backup.

- 2015 - SolidFire: In December 2015 (closing in January 2016), NetApp acquired SolidFire, a flash storage vendor founded in 2009, for $870 million,[18] together with its Active IQ software, which is available to end users as a web-based GUI service for monitoring and predicting storage system performance and availability.

- 2017 - Plexistor: NetApp first announced the acquisition of a company and technology called Plexistor in May 2017. Technologies from Plexistor became the basis of the MAX Data product.

- 2017 - Greenqloud with its Qstack product. A private startup company that created a cloud services, orchestration and management platform for hybrid cloud and multi-cloud environments

- 2017 - Immersive Partner Solutions, a Littleton, Colo.-based developer of software to validate multiple converged infrastructures through their lifecycles

- 2018 - StackPointCloud: NetApp acquired StackPointCloud, a project for multi-cloud Kubernetes as-a-service and a contributor to Kubernetes, which became the basis of the Kubernetes Service product.

- 2019 - Cognigo: Israeli AI-driven data compliance and security supplier

Competition

NetApp competes in the computer data storage hardware industry.[19] In 2009, NetApp ranked second in market capitalization in its industry behind EMC Corporation, now Dell EMC, and ahead of Seagate Technology, Western Digital, Brocade, Imation, and Quantum.[20] In total revenue for 2009, NetApp ranked behind EMC, Seagate and Western Digital, and ahead of Imation, Brocade, Xyratex, and Hutchinson Technology.[21] According to a 2014 IDC report, NetApp ranked second in the network storage industry's 'Big 5' list, behind EMC (Dell), and ahead of IBM, HP and Hitachi.[22] In Gartner's 2018 Magic Quadrant for Solid-State Arrays, NetApp was named a leader, behind only Pure Storage.

Products

NetApp's OnCommand management software controls and automates ... 'the grid', with an ability to make and store multiple copies (replicas) of objects (also known as Replication Factor) or to store them in an Erasure Coding (EC) manner among cluster storage nodes, with object granularity, based on configured policies for data availability and durability purposes. StorageGRID stores metadata separately from the objects and allows users to configure Information Lifecycle Management (ILM) policies on a per-object level, which are automatically re-evaluated and satisfied once changes are introduced to the cluster, such as changes in the cost of network usage or storage media usage, or a node being added or removed. ONTAP, Cloud Backup, SANtricity, and Element X can replicate data to StorageGRID systems. The SG6060 is optimized for high transactional throughput, MA, AI, and FabricPool.

StorageGRID on NetApp HCI

StorageGRID can be deployed on NetApp HCI in three forms: fully contained; high performance and scale; and NetApp HCI and StorageGRID appliance.

E-Series

E5700 with 60 disk drives enclosure

RAID comparison with DDP

DDP components and data reconstruction process

Previously known as LSI Engenio RDAC; after the NetApp acquisition the product was renamed NetApp E-Series. It is a general-purpose enterprise storage system with two controllers for SAN protocols such as Fibre Channel, iSCSI, SAS and InfiniBand (including SRP, iSER and the NVMe over Fabrics protocol). The NetApp E-Series platform uses the proprietary SANtricity OS and a proprietary RAID scheme called Dynamic Disk Pool (DDP) alongside traditional RAID levels such as RAID 10, RAID 6 and RAID 5. In a DDP pool each D-Stripe works similarly to traditional RAID-4 and RAID-6 but at the block level instead of the whole-disk level, and therefore has no dedicated parity drives. Compared to traditional RAID groups, DDP restores the data from a lost disk drive onto multiple drives, which makes reconstruction a few times faster,[28] while traditional RAID restores a lost disk drive onto a single dedicated drive. Starting with SANtricity 11.50, the E-Series systems EF570 and E5700 support NVMe over Ethernet (RoCEv2) with 100 Gbps Ethernet ports, and NVMe over InfiniBand. Synchronous and asynchronous mirroring are supported with SANtricity 11.50. SANtricity Unified Manager is a web-based manager that supports up to 500 EF/E-Series arrays and supports LDAP, RBAC, CA and SSL for authorization and authentication. In August 2019 NetApp announced the EF600 with support for the NVMe/IB, NVMe/RoCE and NVMe/FC protocols, up to 44 GBps of bandwidth and a full-function embedded REST API.

Converged Infrastructure

FlexPod, nFlex and ONTAP AI are commercial names for Converged Infrastructure (CI) solutions. Converged infrastructures are joint products of several vendors and consist of three main hardware components: compute servers, switches (in some cases switches are not necessary) and NetApp storage systems:

- FlexPod based on Cisco Servers and Cisco Nexus switches

- nFlex based on Fujitsu Servers with Extreme Networks switching

- ONTAP AI using NVIDIA supercomputers with Cisco Nexus switches.

Converged infrastructures come with tested and validated design configurations from the vendors, available to end users, and typically include popular infrastructure software such as Docker Enterprise Edition (EE), Red Hat OpenStack Platform, VMware vSphere, Microsoft servers and Hyper-V, SQL, Exchange, Oracle VM and Oracle DB, Citrix Xen, KVM, OpenStack, SAP HANA, etc. They might also include self-service PaaS or IaaS portals such as Cisco UCS Director (UCSD). FlexPod, nFlex and ONTAP AI allow an end user to modify a validated design and add or remove some components of the converged infrastructure, whereas not all competing converged infrastructures allow modification.

FlexPod

FlexPod Converged Infrastructure

There are several FlexPod types: FlexPod Datacenter, FlexPod Select, FlexPod Express (Small, Medium, Large and UCS-managed) and FlexPod SF. FlexPod Datacenter usually uses Nexus switches such as the 5000, 7000 and 9000; Cisco UCS blade servers; and mid-range or high-end NetApp FAS or AFF systems. FlexPod Select is often used with big data framework software such as Hortonworks and Cloudera; the architecture uses Cisco UCS rack servers with direct-attached NetApp E-Series and, in some configurations, also includes low-end FAS systems and Cisco switches. FlexPod Express usually has low-end NetApp FAS/AFF systems; the Small, Medium and Large variants use Cisco UCS rack servers with Nexus 3000 switches, while UCS-managed FlexPod Express uses Cisco blade servers, may add rack servers, and might include switches from Cisco or other vendors. FlexPod SF has in its architecture Nexus 9000 switches, Cisco UCS blade servers and NetApp SolidFire storage based on Cisco UCS rack servers.

Cisco UCS Director is used as the orchestrator for FlexPod, providing a self-service portal, workflow automation and a billing platform to build PaaS and IaaS. FlexPod systems are supported under the cooperative center of competence. NetApp Converged Systems Advisor (CSA) is a software-as-a-service (SaaS) platform that consists of an on-premises agent and a cloud-based portal. Converged Systems Advisor validates the deployment of FlexPod infrastructure and provides continuous monitoring and notifications to ensure business continuity. CSA validates configurations with an automated review against best-practice rules, monitors FlexPod remotely for compliance with best practices, and notifies administrators about recommendations for hardware and firmware compliance.

Multi-Pod is a FlexPod Datacenter solution with a FAS or AFF system that uses MetroCluster technology to stretch the storage system between two sites. NetApp and Cisco are looking to incorporate the NetApp MAX Data product into FlexPod solutions once persistent memory technology is available in UCS servers.

FlexPod Datacenter has the largest variety of architectures and applications designed and validated by Cisco and NetApp, including:

- Microsoft: SQL, Exchange, SharePoint

- Hypervisors: Microsoft Hyper-V, VMware vSphere, Citrix XenServer

- Red Hat Enterprise Linux OpenStack, Citrix XenDesktop/XenApp, Docker Datacenter for Container Management

- IBM Cloud Private, Cisco Hybrid Cloud with Cisco CloudCenter, Microsoft Private Cloud, Citrix CloudPlatform, Apprenda PaaS

- SAP, Oracle Database, Oracle RAC on Oracle Linux, Oracle RAC on Oracle VM

- 3D Graphics Visualization with Citrix and NVIDIA GPU. FlexPod Datacenter for AI leveraging UCS servers with NVIDIA GPU.

- Epic EHR, MEDITECH EHR

FlexPod types:

- FlexPod Express (Small, Medium, Large and UCS-managed)

- FlexPod Datacenter

- FlexPod SF

- FlexPod Select

nFlex

nFlex is a converged infrastructure architecture with the following key components: NetApp FAS/AFF systems, Extreme Networks data center switches and Fujitsu Primergy servers. nFlex is available with FUJITSU Software Enterprise Service Catalog Manager, which provides a self-service portal for enterprises and service providers to automate the delivery of their software services, infrastructure services, or platform services to their employees and customers.

ONTAP AI

NetApp ONTAP AI

ONTAP AI is a converged infrastructure solution based on Cisco Nexus 3000 switches with 100 Gbps ports, NetApp AFF storage systems and Nvidia DGX supercomputer servers interconnected using RDMA over Converged Ethernet (RoCE). It was developed for deep learning based on Docker containers with the NetApp Docker plugin Trident. With SnapMirror, the ONTAP AI solution can deliver data between edge computing, on-premises systems and the cloud as part of the Data Fabric vision. ONTAP AI is tested and validated for use with NFS and FlexGroup technologies. Combined technical support is provided to customers for all the architecture components.

OnCommand Insight

OnCommand Insight (OCI) is data center management software that provides capacity management, infrastructure analytics, and a centralized view into historical trends to forecast performance and capacity requirements and to guide workload placement. OCI works with all NetApp storage systems, with competitor storage systems, and in the public cloud. It is licensed, server-based software.

Memory Accelerated Data

Memory Accelerated Data is known as NetApp MAX Data for short. MAX Data is a proprietary Linux file system. NetApp officially announced MAX Data product availability at NetApp Insight 2018 in October. It initially supported RHEL Server 7.5 and CentOS 7.5, and later RHEL Server 7.6 and CentOS 7.6. With version 1.2, MAX Data supports in-guest VM configuration for VMware vSphere starting with 6.7 U2, and in-guest iSCSI connections. NetApp has also done some KVM testing, while containers are on the long-term roadmap. MAX Data can also run in the cloud, but this is currently not supported. MAX Data came from the acquisition of Plexistor in May 2017. Plexistor was founded in 2013. The technology is positioned for artificial intelligence (deep learning and machine learning, fraud prevention/banking), real-time analytics/trading platforms, data warehouses, IoT workloads, and applications like MongoDB, Cassandra DB, Couchbase, Oracle DB, JDE-type databases, Spark, etc., but it can run any application that can use the MAX Data file system. Whether MAX Data is suitable for SAP HANA is currently being qualified, and the combination is not supported yet.

Technology explanation

MAX Data consists of two tiers, Tier 1 and Tier 2. The MAX Data tiering algorithm destages cold data from Tier 1 to Tier 2 and promotes data from Tier 2 to Tier 1 when it is accessed, transparently to applications. NetApp currently recommends a ratio of 1 to 25 for Tier 1 and Tier 2 respectively. According to NetApp,[29] MAX Data will have two modes: use as a POSIX-compatible file system (internally named M1FS) or use as an API memory extension. Using MAX Data as a POSIX file system does not require application modifications, while the API memory extension requires applications to be modified to use it.

MAX Data is installed on Linux hosts to provide ultra-low latency, using persistent memory such as Optane DC persistent memory (Optane DCPMM), NVDIMM, or DRAM (when persistence is not needed, for example for testing) for Tier 1, and a NetApp AFF storage system for Tier 2. Optane DCPMM is the Intel brand name for products that use 3D XPoint technology; it is supported starting with MAX Data version 1.3 and requires a second-generation Intel Xeon Scalable CPU.

MAX FS is a persistent-memory-based file system (PM-based FS) that does not require application modification, but it can also act as a Direct Access enabled file system (DAX-enabled FS) for applications optimized for persistent memory using SPDK. DAX is the mechanism that enables direct access to files stored in persistent memory arrays without the need to copy the data through the page cache.[30] MAX FS is based on the ZUFS (Zero-copy User-mode File System) interface for user-space file systems and does not need a dedicated kernel module, in contrast to file systems such as NTFS based on FUSE, or XFS and EXT4, which connect directly to the VFS so that each file system requires its own kernel module. ZUFS operates in Linux user space and consists of two modules, zuf (Zu Feeder) and zus (Zu Server), available as open source; zuf is a kernel-to-userspace bridge.
Used as a memory extension, MAX Data is NUMA aware, byte-addressable, offers near-memory latency (3-10 microseconds), and is optimized for random I/O and persistent memory; like FUSE-based file systems, it has no page caching. MAX Data is integrated with NetApp ONTAP as part of the Data Fabric vision. Currently, MAX Data works over SAN (FC and iSCSI) with AFF systems on the back end, or with SSD drives installed in the same server (starting with MAX Data 1.4). NetApp is considering adding FC-NVMe, a protocol already available in ONTAP; iNVMe (NVMe over TCP), which is currently not supported by ONTAP; and NAS protocols, which were tested on MAX Data with SDS instances of ONTAP, i.e., ONTAP Select and NetApp Cloud Volumes ONTAP, according to Tech ONTAP podcast 154 on SoundCloud. NetApp is also looking into integrating MAX Data with ONTAP FlexCache functionality for NFS caching in the long run. NetApp has also tested MAX Data with Element OS, but this currently needs some server-side modifications and is not supported.

MAX Data provides persistent memory capabilities and includes data protection capabilities such as snapshots (MAX Snap), SVM SnapMirror, cloning, data tiering, and MAX Recovery, which enterprise companies running in-memory processing applications consider key functionality. MAX Recovery is a technology that copies data from the memory of one (primary) server over a RoCE network to the memory of another (recovery) server, which allows data to be recovered back to the original primary server in seconds, compared to hours if it were done from SSD media. For the cluster connection for MAX Recovery, NetApp recommends a dedicated 100 Gbps RDMA over Converged Ethernet (RoCEv2) network (also referred to as Persistent Memory over Fabrics, PMoF), which adds 2-3 microseconds of latency compared to single-node configurations.
MAX Snap is a snapshot at the MAX Data (Tier 1) level. It can call another MAX Data service, Sync Snap, to synchronize the snapshot with Tier 2, so that the storage system captures and merges the data into a coherent, consistent snapshot that can later be replicated with SnapMirror. MAX Data has APIs for application-consistent snapshots, and there are plans to integrate it with SnapCenter, but it currently has no data protection software integrations. MAX Data has a three-month release cadence. The MAX Data Memory API allows new applications to integrate with MAX Data for application-based and potentially more intelligent memory management, which requires application modification, whereas MAX Data with its data tiering algorithm does not need any application modifications when used as a POSIX file system. MAX Data has a Pin function that can pin a file, or a certain range within a file, so that it will not be destaged to the second tier.

According to Yahoo! Cloud Serving Benchmark (YCSB) results shown at Tech Field Day on October 24, 2018, MAX Data reached 3.7 times more IOPS and 4-5 times lower latency compared to Linux XFS on NVMe flash, in an AWS EC2 instance with 64 GB of memory (with a 32 GB cache size for MongoDB) and a 50% read-modify-write workload.
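The destage, promote, and pin behavior described above can be illustrated with a small toy model. This is only a sketch under stated assumptions, not MAX Data code: the class `TwoTierStore`, its methods, and the helper `tier2_size_for` are hypothetical names, whole files stand in for data, and "coldest" is assumed to mean least recently accessed, since the source does not say how the tiering algorithm ranks data. The helper simply applies the recommended 1 to 25 ratio.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class TwoTierStore:
    """Toy two-tier model (hypothetical; not the MAX Data implementation)."""

    tier1_capacity: int                                   # max files held in Tier 1
    tier1: Dict[str, int] = field(default_factory=dict)   # file name -> last access tick
    tier2: Set[str] = field(default_factory=set)          # files destaged to Tier 2
    pinned: Set[str] = field(default_factory=set)         # files that must stay in Tier 1
    clock: int = 0                                        # logical access counter

    def access(self, name: str) -> str:
        """Touch a file and report where it was found: 'tier1', 'tier2', or 'new'."""
        self.clock += 1
        if name in self.tier1:
            found = "tier1"
        elif name in self.tier2:
            found = "tier2"
            self.tier2.remove(name)      # promote to Tier 1 on access
        else:
            found = "new"
        self.tier1[name] = self.clock
        self._destage_if_full()
        return found

    def pin(self, name: str) -> None:
        """Mark a file so that it is never destaged to Tier 2."""
        self.pinned.add(name)

    def _destage_if_full(self) -> None:
        # Assumption: the coldest file is the least recently accessed one.
        while len(self.tier1) > self.tier1_capacity:
            candidates = [n for n in self.tier1 if n not in self.pinned]
            if not candidates:
                break                    # everything is pinned; nothing to destage
            coldest = min(candidates, key=self.tier1.get)
            del self.tier1[coldest]
            self.tier2.add(coldest)


def tier2_size_for(tier1_size_gb: int, ratio: int = 25) -> int:
    """Tier 2 size implied by the recommended 1:25 Tier 1 to Tier 2 ratio."""
    return tier1_size_gb * ratio
```

With a Tier 1 capacity of two files, accessing `a`, `b`, pinning `a`, and then accessing `c` destages `b` rather than the older but pinned `a`. Accessing `b` again finds it in Tier 2 and promotes it, which in turn destages `c`. For sizing, `tier2_size_for(512)` gives 12800, i.e. 512 GB of Tier 1 paired with 12.8 TB of Tier 2 under the 1:25 ratio.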

MAX Data has a per-server license that does not depend on CPU, memory, or storage capacity. MAX Data has two licensing tiers: Basic and Advanced. Advanced licensing includes enterprise capabilities such as snapshots and MAX Recovery, while Basic is used when only performance with tiering functionality is needed.

Cloud Business

Cloud Central is a web-based GUI that provides a multi-cloud application orchestration layer and a single pane of glass, based on Qstack, for NetApp's cloud products such as Cloud Volumes Service, Cloud Sync, Cloud Insights, Cloud Volumes ONTAP, and SaaS Backup across multiple public cloud providers. NetApp offers some of its original or modified products as part of its cloud portfolio alongside new cloud-native products. For example, Cloud Volumes ONTAP and NPS are repurposed for use in the cloud, while others are cloud-native. NetApp's cloud business, offerings, and products are available on the http://cloud.netapp.com portal.

Cloud Volumes ONTAP

Formerly ONTAP Cloud. Cloud Volumes ONTAP (CVO) is a software-defined storage (SDS) version of ONTAP available in some public cloud providers, such as AWS, Azure, Google Cloud, and IBM Cloud. Cloud Volumes ONTAP is a virtual machine that uses commodity equipment and runs ONTAP software as a service.

Cloud Volumes Service

Cloud Volumes Service is a service in the Amazon AWS and Google GCP public clouds based on NetApp All-Flash FAS systems and ONTAP software, and can therefore synchronize data between the cloud and on-premises NetApp systems. NetApp claims that it will provide a persistent performance SLA similar to that of AFF systems, and also claims that data can be moved using SnapMirror technology in a way that does not generate large ingress and egress charges. Cloud Volumes Service will provide high availability for data with the following protocols, which can be consumed by containers: NFS v3, NFS v4, and SMB. Cloud Volumes Service supports NetApp Snapshots. The current maximum for Cloud Volumes Service is 100 TB. In the Azure cloud, only NFS is currently available, and the service is called Azure NetApp Files. Cloud Volumes Service is available as a service directly through the marketplaces of the corresponding public cloud providers. NetApp describes these offerings as reliable enterprise NAS services, comparable to on-premises enterprise-grade data storage, with predictable performance, management, security, and protection. The NAS service integrates with some other services in the public cloud providers. RESTful APIs will be available to automate NAS storage services such as provisioning, snapshots, and SnapMirror. As of January 2018, the services for both AWS and Azure were in the preview stage.

Cloud Volumes On-Prem

Cloud Volumes On-Prem is storage installed on premises in a customer's data center and made available to the customer as a service. All updates and technical support are provided by NetApp, while the customer consumes space from the storage using a web-based GUI or an API.

NetApp Private Storage

NetApp Private Storage (NPS) is based on a colocation service provided by partner Equinix in its data centers for NetApp storage systems, with a 10 Gbit/s direct connection to public cloud providers such as Azure and AWS. Some Equinix data centers are located in the same building as public cloud providers, so network connectivity to the dedicated storage system is the same as with a storage service in the public cloud. Such a configuration provides better performance compared to a public cloud storage service based on shared commodity hardware, and helps companies meet regulatory compliance requirements for strict data placement, security, availability, and disaster recovery that a public cloud provider cannot provide. NPS storage can be connected to several cloud providers or to on-premises infrastructure, so switching between clouds does not require data migration between them.

Kubernetes Service

NetApp Kubernetes Service (NKS) came from the acquisition of stackpoint.io. It is a cloud-based SaaS application for deploying and managing Kubernetes clusters across cloud providers and on-premises:

- AWS

- Azure

- Google Cloud

- NetApp HCI

- VMware

Kubernetes Service is used for creating and managing Kubernetes clusters, managing access control, setting up and managing Kubernetes clusters across clouds, and creating Helm charts and deploying them from a GitHub repository. Its features include load balancing, application migration across Kubernetes clusters, load spreading across clusters, Istio traffic management, persistent volumes with the NetApp Trident plugin for Docker and Kubernetes, Kubernetes upgrades, and an HTTP API for automation. NetApp allows upgrading to the newest Kubernetes release with about a 5-day delay after its release.

SaaS Backup

NetApp SaaS Backup (previously Cloud Control) is a backup and recovery service for the SaaS offerings Microsoft Office 365 and Salesforce. It provides extended, granular, and custom retention capabilities for the backup and recovery process compared to native cloud backup. NetApp plans to extend the SaaS Backup and recovery service to Google Apps (G Suite), Slack, and ServiceNow.

Cloud Sync

Cloud Sync is a service for synchronizing any NAS storage system with another NAS storage system, or with object storage such as Amazon S3 or NetApp StorageGRID, using an object protocol.

Cloud Insights

Cloud Insights is a SaaS application for monitoring the infrastructure and application stack of customers consuming cloud resources, and is also built for the dynamic nature of microservices and web-scale infrastructures. Cloud Insights uses a front-end API similar to that of OnCommand Insight but different technology on the back end. Cloud Insights is available as a preview and will have three editions: Free, Standard, and Pro. The Free edition will be able to monitor NetApp storage and services using NetApp Active IQ at no cost.

NDAS